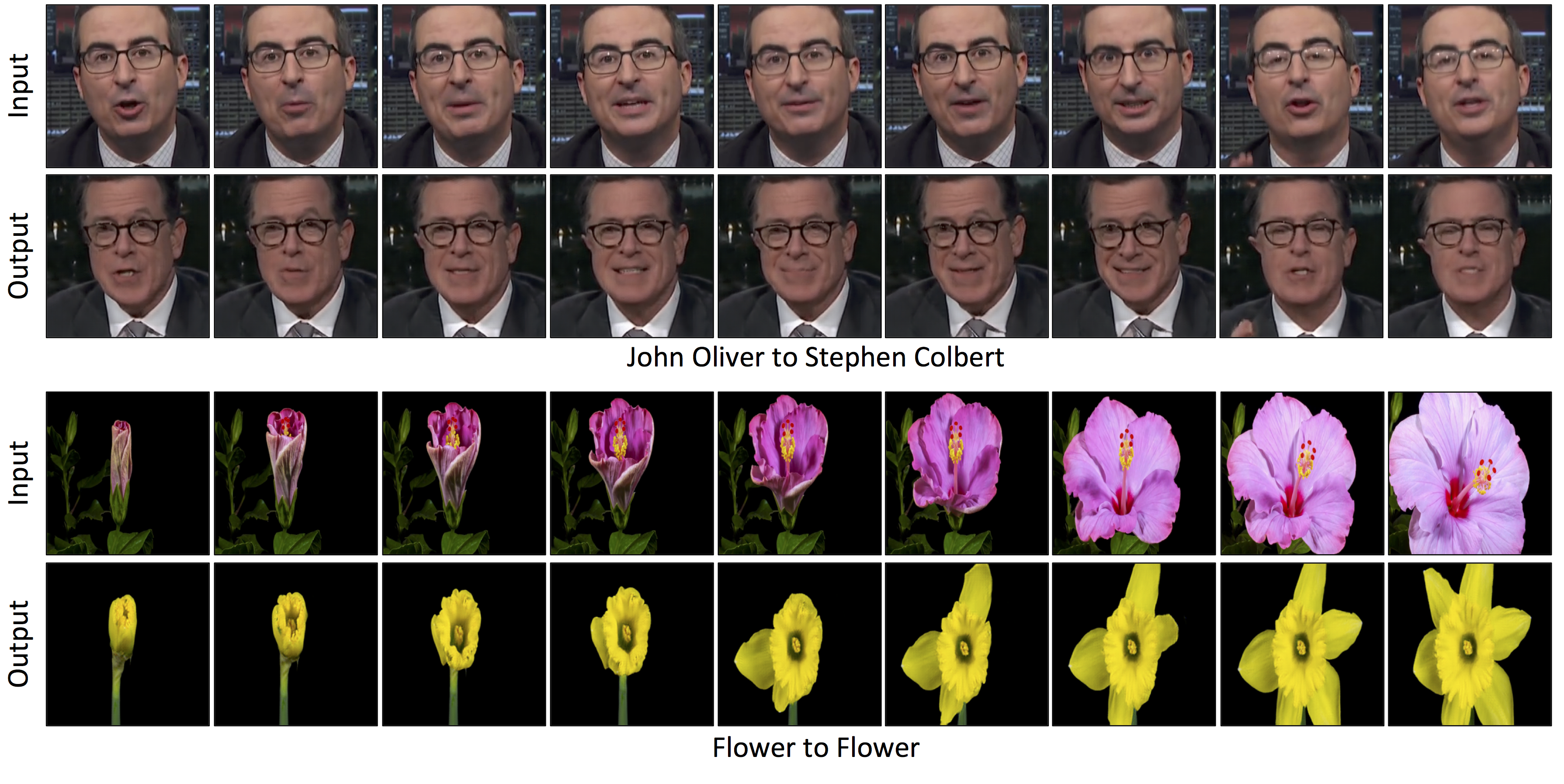

Researchers at Carnegie Mellon University have created a method that transfers the content of one video into the style of another. The idea is easier to see than to describe: in the researchers' demonstration video, they take an entire clip of John Oliver and make it look as though Stephen Colbert delivered it. They were also able to transfer the motion of one flower opening onto another flower.

In short, they can make anyone (or anything) look like they are doing something they never did.

“I think there are a lot of stories to be told,” said CMU Ph.D. student Aayush Bansal. He and the team built the tool to make complex films easier to shoot, perhaps by capturing the motion in simple, well-lit scenes and transferring it into an entirely different style or environment.

“It’s a tool for the artist that gives them an initial model that they can then improve,” he said.

The system uses generative adversarial networks (GANs) to transfer the style of one image onto another without requiring much matched training data. GANs, however, tend to produce artifacts that become visible and distracting as the video plays.

In a GAN, two models are trained together: a discriminator that learns to detect what is consistent with the style of one image or video, and a generator that learns to create images or videos that match that style. As the two compete (the generator trying to trick the discriminator, and the discriminator scoring how well the generator succeeds), the system eventually learns how content can be transformed into a given style.
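To make that competition concrete, here is a minimal sketch of the two objectives in a standard GAN, written in plain Python. It assumes the discriminator outputs a probability that a sample is real; the function names are hypothetical, and a real system would compute these losses over batches of images and update both networks by gradient descent.

```python
import math


def discriminator_loss(d_real: float, d_fake: float) -> float:
    """Binary cross-entropy loss for the discriminator (hypothetical helper).

    d_real: the discriminator's probability that a real sample is real.
    d_fake: the discriminator's probability that a generated sample is real.
    """
    # Low loss when real samples score near 1 and generated samples near 0.
    return -(math.log(d_real) + math.log(1.0 - d_fake))


def generator_loss(d_fake: float) -> float:
    """Non-saturating generator loss (hypothetical helper).

    The generator is rewarded when the discriminator scores its output as real.
    """
    return -math.log(d_fake)


# A confident, correct discriminator versus one that has been fooled.
print(discriminator_loss(0.9, 0.1), generator_loss(0.1))
print(discriminator_loss(0.5, 0.5), generator_loss(0.5))
```

When the discriminator is confident and correct (0.9 for a real sample, 0.1 for a fake), its own loss is small and the generator's loss is large. Training pushes the generator to raise `d_fake` until the discriminator can no longer reliably tell the two apart.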

The researchers developed a variant called Recycle-GAN that reduces these imperfections by using “not only spatial, but temporal information.”

“This additional information, accounting for changes over time, further constrains the process and produces better results,” wrote the researchers.
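The temporal idea can be illustrated with a toy "recycle" term: translate a few past frames from video X into style Y, predict the next frame in Y, map that prediction back into X, and penalize its difference from the real next frame. This is a simplified sketch of the concept, not the researchers' code. Frames are represented as flat lists of numbers, and `to_y`, `to_x` and `predict_next` are hypothetical stand-ins for trained networks. In a full system, a term like this would be combined with the adversarial losses described earlier.

```python
from typing import Callable, List, Sequence

Frame = List[float]  # toy representation: a frame flattened to pixel values


def squared_error(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((p - q) ** 2 for p, q in zip(a, b))


def recycle_loss(
    xs: Sequence[Frame],
    g_y: Callable[[Frame], Frame],  # hypothetical: translates a frame from X to Y
    g_x: Callable[[Frame], Frame],  # hypothetical: translates a frame from Y back to X
    p_y: Callable[[Sequence[Frame]], Frame],  # hypothetical: predicts the next Y frame
    history: int = 2,
) -> float:
    """Sum of squared errors between real next frames and 'recycled' predictions."""
    total = 0.0
    for t in range(history, len(xs)):
        past_y = [g_y(x) for x in xs[t - history:t]]  # past X frames rendered in style Y
        predicted_y = p_y(past_y)                     # guess the next frame in Y
        recycled_x = g_x(predicted_y)                 # bring the guess back into X
        total += squared_error(xs[t], recycled_x)     # compare with the real next frame
    return total


# Hypothetical toy "networks": a reversible style change and a linear extrapolator.
def to_y(frame: Frame) -> Frame:
    return [2.0 * v for v in frame]


def to_x(frame: Frame) -> Frame:
    return [v / 2.0 for v in frame]


def predict_next(frames: Sequence[Frame]) -> Frame:
    prev, last = frames[-2], frames[-1]
    return [2.0 * b - a for a, b in zip(prev, last)]


steady = [[float(t), float(t) + 1.0] for t in range(5)]  # changes at a constant rate
jumpy = [[0.0], [1.0], [4.0]]                             # sudden change in the last frame
print(recycle_loss(steady, to_y, to_x, predict_next))
print(recycle_loss(jumpy, to_y, to_x, predict_next))
```

Because the toy translators are exact inverses and the predictor extrapolates linearly, a clip whose frames change at a constant rate yields zero loss, while an abrupt, unpredicted change is penalized. That penalty on frame-to-frame inconsistency is the kind of extra constraint over time the researchers describe.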

Recycle-GAN could obviously also be used to create so-called deepfakes, allowing nefarious folks to simulate someone saying or doing something they never did. Bansal and his team are aware of the problem.

“It was an eye-opener to all of us in the field that such fakes would be created and have such an impact. Finding ways to detect them will be important moving forward,” said Bansal.